How RPA and AI Work Together in Intelligent Automation

May 13, 2026

Computer Application Information and Research Institute

AI and Robotics: How Artificial Intelligence is Transforming Robotic Automation

Artificial intelligence (AI) in robotics is opening new ways for organizations to use machines to optimize operations. According to a McKinsey report, AI-powered automation could boost global productivity by up to 1.4% annually, with sectors like manufacturing, healthcare, and logistics seeing the most significant transformation.

However, integrating AI into robotics requires overcoming challenges related to data limitations and ethical concerns. Also, the lack of diverse datasets for domain-specific environments makes it difficult to train effective AI models for robotic applications.

In this post, we will explore how AI is transforming robotic automation, its applications, challenges, and future potential. We will also see how Encord can help address issues in developing scalable AI-based robotic systems.

Difference between AI and Robotics

Artificial Intelligence (AI) and robotics are different yet interconnected fields within engineering and technology. Robotics focuses on designing and building machines capable of performing physical tasks, while AI enables these machines to perceive, learn, and make intelligent decisions.

AI consists of algorithms that enable machines to analyze data, recognize patterns, and make decisions without explicit programming. It uses techniques like natural language processing (NLP) and computer vision (CV) to allow machines to perform complex tasks.

For instance, AI powers everyday technologies, such as Google’s search algorithms, re-ranking systems, and conversational chatbots like Gemini and ChatGPT by OpenAI.

Robotics, however, focuses on designing, building, and operating programmable physical systems that can work independently or with minimal human assistance. These systems use sensors to gather information and may follow programmed instructions to move, pick up objects, or communicate.

The integration of AI with robotic systems helps them perceive their environment, plan actions, and control their physical components to achieve specific objectives, such as navigation, object manipulation, or autonomous decision-making.

Why is AI Important for Robotics?

AI-powered robotic systems can learn from data, recognize patterns, and make intelligent decisions without requiring repetitive programming. Here are some key benefits of using AI in robotics:

Enhanced Autonomy and Decision-Making

Traditional robots use rule-based programs that limit their flexibility and adaptability. AI-driven robots analyze their environment, assess different scenarios, and make real-time decisions without human intervention.

Improved Perception and Interaction

AI improves a robot’s ability to perceive and interact with its surroundings. NLP, CV, and sensor fusion enable robots to recognize objects, speech, and human emotions. For example, AI-powered service robots in healthcare can identify patients, understand spoken instructions, and detect emotions through facial expressions and tone of voice.

Learning and Adaptation

AI-based robotic systems can learn from experience using machine learning (ML) and deep learning (DL) technologies. They can analyze real-time data, identify patterns, and refine their actions over time.

Faster Data Processing

Modern robotic systems rely on sensors such as cameras, LiDAR, radar, and motion detectors to perceive their surroundings. Processing such diverse data types simultaneously is computationally demanding. AI models can speed up this processing so that the robot can make decisions in real time.

Predictive Maintenance

AI improves robotic reliability by detecting wear and tear and predicting potential failures to prevent unexpected breakdowns. This is important in high-demand environments like the manufacturing industry, where downtime can be costly.
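As a rough illustration of failure prediction from sensor data, the sketch below flags readings that deviate sharply from their recent history. It is a simple statistical stand-in (a rolling z-score), not a trained model; the function name `flag_anomalies`, the window size, the threshold, and the vibration values are all assumptions made up for this example.

```python
from statistics import mean, stdev

def flag_anomalies(readings, window=5, threshold=3.0):
    """Return indices of readings that sit more than `threshold` standard
    deviations away from the mean of the preceding `window` readings."""
    flagged = []
    for i in range(window, len(readings)):
        history = readings[i - window:i]
        mu = mean(history)
        sigma = stdev(history)
        if sigma == 0:
            # A perfectly flat history gives no scale to compare against.
            continue
        if abs(readings[i] - mu) / sigma > threshold:
            flagged.append(i)
    return flagged

# Hypothetical vibration readings (mm/s) from a robot joint; index 7 is a spike.
vibration_mm_s = [2.0, 2.1, 1.9, 2.0, 2.2, 2.1, 2.0, 4.8, 2.1, 2.0]
suspect_indices = flag_anomalies(vibration_mm_s)
print(suspect_indices)
```

A production system would more likely learn what normal behavior looks like from historical data, but the idea is the same: compare new sensor readings against expected behavior and raise an alert before the part fails.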

How is AI Used in Robotics?

While the discussion above highlights the benefits of AI in robotics, it does not yet clarify how robotic systems use AI algorithms to operate and execute complex tasks. The most common types of AI robots include:

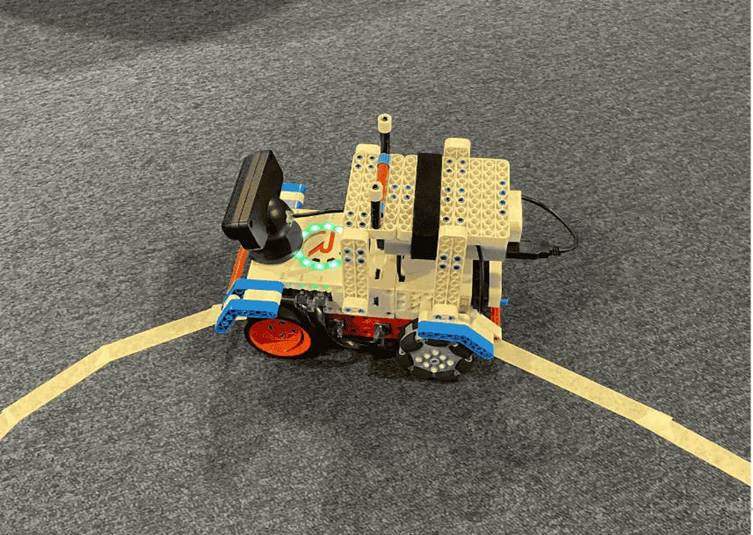

AI-Driven Mobile Robots

An AI-based mobile robot (AMR) navigates environments intelligently, using advanced sensors and algorithms to operate efficiently and safely. It can:

AMRs are highly valuable on the factory floor to improve workflow efficiency and productivity. For example, in warehouse inventory management, an AMR can intelligently navigate through aisles, dynamically adjust its route to avoid obstacles and congestion, and autonomously transport goods.
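The rerouting behavior described above can be sketched with a small grid search. This is a minimal example under stated assumptions: the warehouse is a hypothetical 3x5 grid, the planner is plain breadth-first search, and the names `shortest_path` and `warehouse` are invented for illustration. Real AMRs typically use richer maps and planners.

```python
from collections import deque

def shortest_path(grid, start, goal):
    """Breadth-first search over 4-connected free cells.
    grid[r][c] == 1 marks an obstacle. Returns a list of cells or None."""
    rows, cols = len(grid), len(grid[0])
    parents = {start: None}
    queue = deque([start])
    while queue:
        cell = queue.popleft()
        if cell == goal:
            # Walk the parent links back to the start to rebuild the route.
            path = []
            while cell is not None:
                path.append(cell)
                cell = parents[cell]
            return path[::-1]
        r, c = cell
        for nr, nc in ((r + 1, c), (r - 1, c), (r, c + 1), (r, c - 1)):
            inside = 0 <= nr < rows and 0 <= nc < cols
            if inside and grid[nr][nc] == 0 and (nr, nc) not in parents:
                parents[(nr, nc)] = cell
                queue.append((nr, nc))
    return None

# Hypothetical warehouse: shelving (1) in the middle row, aisles (0) around it.
warehouse = [
    [0, 0, 0, 0, 0],
    [0, 1, 1, 1, 0],
    [0, 0, 0, 0, 0],
]
route = shortest_path(warehouse, (0, 0), (2, 0))

# A pallet is dropped in the left aisle, so the robot plans again.
warehouse[1][0] = 1
rerouted = shortest_path(warehouse, (0, 0), (2, 0))
print(route)
print(rerouted)
```

Replanning from scratch whenever the map changes is the simplest way to adjust a route dynamically. It is affordable on a grid this small, whereas larger maps usually call for incremental planners.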

Articulated Robotic Systems

Articulated robotic systems (ARS), or robotic arms, are widely used in industrial settings for tasks like assembly, welding, painting, and material handling. They assist humans with heavy lifting and repetitive work to improve efficiency and safety.

Modern ARS use AI to process sensor data, enabling real-time perception, decision-making, and precise task execution. AI algorithms help them interpret their operating environment, dynamically adjust movements, and optimize performance for specific applications like assembly lines or warehouse automation.

Collaborative Robots

Collaborative robots, or cobots, work safely alongside humans in shared workspaces. Unlike traditional robots that operate in isolated environments, cobots use AI-powered perception, ML, and real-time decision-making to adapt to dynamic human interactions.

Universal Robots’ UR Series is a good example of a cobot used in manufacturing. These cobots help with tasks like assembly, packaging, and quality inspection. They work alongside factory workers to improve efficiency and human-robot collaboration.

AI-Powered Humanoid Robots

AI-based humanoid robots replicate the human form, cognitive abilities, and behaviors. They integrate AI to perform tasks fully autonomously or in collaboration with humans.

These robotic systems combine mechanical structures with AI technologies like CV and NLP to interact with humans and provide assistance.

For example, Sophia is one of the most well-known AI-powered humanoid robots, developed by Hanson Robotics. Sophia engages with humans using advanced AI, facial recognition, and NLP. She can hold conversations, express emotions, and even learn from interactions.

AI Models Powering Robotics Development

AI is transforming the robotics industry, allowing organizations to build large-scale autonomous systems to handle complex tasks more independently and efficiently.

Key advancements driving such transformation include DL models for perception, reinforcement learning (RL) frameworks for adaptability, motion planning for control, and multimodal architectures for processing different types of information.

Let’s discuss these in more detail:

Deep Learning for Perception

DL processes images, text, speech, or time-series data from robotic sensors to analyze complex information and identify patterns. DL algorithms, like convolutional neural networks (CNNs), can analyze image and video data to understand its content. In contrast, Transformer and recurrent neural network (RNN) models process sequential data like speech and text.

A sample CNN architecture for image recognition
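To make the core CNN operation concrete, here is a minimal sketch of a single convolution followed by a ReLU activation, written in plain Python. The image, the hand-picked vertical-edge kernel, and the helper names `conv2d` and `relu` are assumptions for illustration. In a real CNN the kernel values are learned during training, and an optimized library would be used instead of nested loops.

```python
def conv2d(image, kernel):
    """Valid (no padding, stride 1) 2D cross-correlation, the sliding-window
    operation at the heart of a convolutional layer."""
    kh, kw = len(kernel), len(kernel[0])
    out_h = len(image) - kh + 1
    out_w = len(image[0]) - kw + 1
    return [
        [
            sum(image[i + a][j + b] * kernel[a][b]
                for a in range(kh) for b in range(kw))
            for j in range(out_w)
        ]
        for i in range(out_h)
    ]

def relu(feature_map):
    """Keep positive responses and zero out the rest."""
    return [[max(0, v) for v in row] for row in feature_map]

# Tiny grayscale image: dark region on the left, bright region on the right.
image = [
    [0, 0, 0, 1, 1],
    [0, 0, 0, 1, 1],
    [0, 0, 0, 1, 1],
    [0, 0, 0, 1, 1],
]
# Hand-picked kernel that responds to dark-to-bright vertical edges.
vertical_edge = [
    [-1, 0, 1],
    [-1, 0, 1],
    [-1, 0, 1],
]
features = relu(conv2d(image, vertical_edge))
print(features)  # [[0, 3, 3], [0, 3, 3]]
```

The feature map is zero over the uniform dark region and positive where the window overlaps the edge, which is the kind of local pattern detection that stacked convolutional layers build on.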

For instance, AI-based CV models play a crucial role in robotic perception, enabling real-time object recognition, tracking, and scene understanding. Some commonly used models include:

Reinforcement Learning for Adaptive Behavior

RL enables robots to learn through trial and error by interacting with their environment. The robot receives feedback in the form of rewards for successful actions and penalties for undesirable outcomes. Popular RL frameworks used in robotics include:
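As a rough illustration of the reward-and-penalty loop described in this section, the sketch below trains a tabular Q-learning agent on a toy one-dimensional corridor. Everything here is an assumption for the example: the five-cell environment, the reward values, the hyperparameters, and the names `step` and `train`. It is not tied to any particular robotics framework.

```python
import random

N_STATES = 5          # corridor cells 0..4; cell 4 is the goal
ACTIONS = (-1, +1)    # index 0 = step left, index 1 = step right
ALPHA, GAMMA, EPSILON = 0.5, 0.9, 0.2  # learning rate, discount, exploration

def step(state, action_idx):
    """Move one cell, staying inside the corridor.
    Reward is +1 at the goal and a small penalty for every other step."""
    next_state = min(max(state + ACTIONS[action_idx], 0), N_STATES - 1)
    done = next_state == N_STATES - 1
    reward = 1.0 if done else -0.01
    return next_state, reward, done

def train(episodes=200, seed=0):
    rng = random.Random(seed)
    q = [[0.0, 0.0] for _ in range(N_STATES)]
    for _ in range(episodes):
        state, done = 0, False
        while not done:
            # Epsilon-greedy: mostly exploit the table, sometimes explore.
            if rng.random() < EPSILON:
                action = rng.randrange(2)
            else:
                action = max((0, 1), key=lambda a: q[state][a])
            next_state, reward, done = step(state, action)
            # Q-learning target: reward plus discounted best future value.
            target = reward if done else reward + GAMMA * max(q[next_state])
            q[state][action] += ALPHA * (target - q[state][action])
            state = next_state
    return q

q_table = train()
# Greedy action for every non-goal cell after training.
policy = ["left" if row[0] > row[1] else "right" for row in q_table[:-1]]
print(policy)
```

After training, the greedy policy heads right toward the goal from every cell. Real robots face continuous states and actions, so practical systems replace the table with a neural network, but the update rule follows the same trial-and-error idea.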

Multi-modal Models

Multi-modal models combine data from sensors like cameras, LiDAR, microphones, and tactile sensors to enhance perception and decision-making. Integrating multiple sources of information helps robots develop a more comprehensive understanding of their environment. Examples of multimodal frameworks used in robotics include:
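The benefit of combining several information sources can be shown with a much simpler technique than a learned multi-modal model: inverse-variance weighting of two noisy distance estimates. This is a classical sensor-fusion sketch offered as a stand-in. The function name `fuse` and the camera and LiDAR numbers are hypothetical.

```python
def fuse(estimates):
    """Inverse-variance weighted average of (value, variance) pairs.
    More certain sensors (smaller variance) get more weight."""
    weights = [1.0 / variance for _, variance in estimates]
    total = sum(weights)
    value = sum(w * v for w, (v, _) in zip(weights, estimates)) / total
    fused_variance = 1.0 / total
    return value, fused_variance

# Hypothetical distance-to-obstacle estimates: (metres, variance in m^2).
camera = (2.10, 0.04)   # noisier depth estimate
lidar = (2.00, 0.01)    # more precise range reading
fused_distance, fused_variance = fuse([camera, lidar])
print(round(fused_distance, 3), round(fused_variance, 4))
```

The fused estimate lands closer to the more precise LiDAR reading, and its variance is lower than that of either sensor alone. Learned multi-modal models pursue the same goal with features extracted from each modality rather than hand-set variances.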

Challenges of Integrating AI in Robotics

Advancements in AI are allowing robots to perceive their surroundings better, make real-time decisions, and interact with humans. However, integrating AI into robotic systems presents several challenges. Let’s briefly discuss each of them.
